The Democracy of AI: Deliberative Consensus Across Heterogeneous Language Models

There is a fact about artificial intelligence so plain that the industry has walked past it like a man stepping over a diamond because it looks like a pebble.

One mind, however brilliant, cannot argue with itself.

A single person locked in a room will reach a conclusion. They may reach a good one. But they will never reach the conclusion that emerges from five people in a room who disagree with each other, because disagreement is not a failure of the process. Disagreement is the process. It is the mechanism by which assumptions get caught, contradictions get surfaced, and comfortable consensus gets tested before it hardens into expensive mistakes.

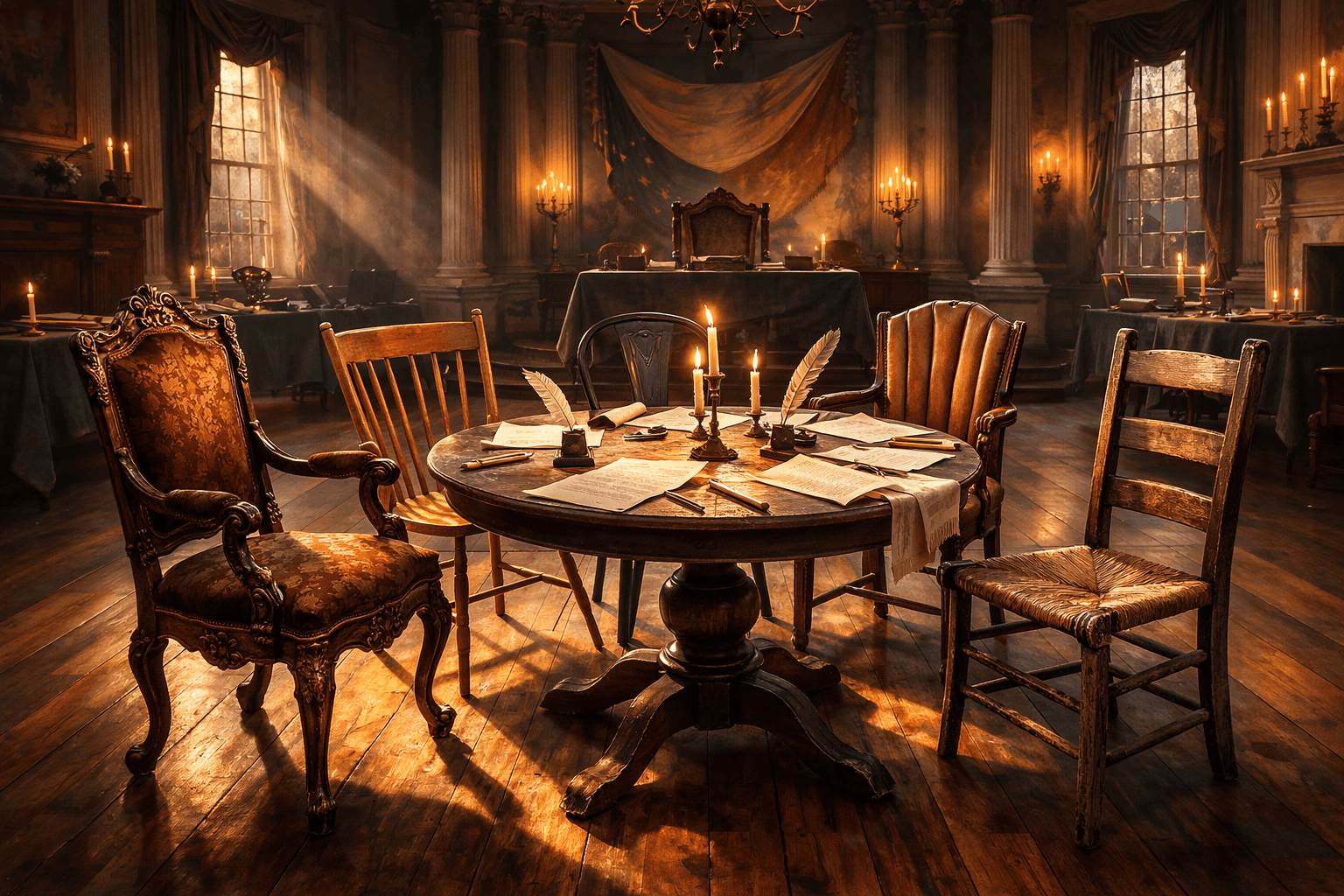

We know this. We have known it for centuries. We built parliaments to force disagreement into the open. We invented juries so that no single person's conviction could condemn a stranger. We designed the Constitutional Convention so that founders with competing interests and genuinely different expertise would forge a shared document that none of them could have written alone. These are not polite traditions. They are technologies of collective reasoning, load-bearing institutions that hold the weight of real decisions.

And yet, when it comes to artificial intelligence, we hand every decision to a single model and ask it to play all the parts.

The AI industry has a term for when you ask one model to simulate multiple perspectives. They call it role-playing. You tell Claude to be an architect, then a tester, then a critic. You give GPT a system prompt that says "you are a frontend engineer" and another that says "you are a security auditor." The model obliges. It is very good at it. It sounds like a debate. It looks like disagreement.

It is not disagreement.

It is one brain wearing five hats. The assumptions are shared. The blind spots are shared. The training data is shared. The reasoning patterns that lead the model to favor certain solutions and overlook certain risks are identical across every costume change. When Claude-as-architect agrees with Claude-as-critic, that agreement has been filtered through exactly one analytical framework. It has the epistemic value of a man nodding at his own reflection and calling it a consensus.

This is a structural problem, not a subtle one. And it has a structural solution, one that humanity discovered long before electricity, let alone machine learning.

The concept I am introducing here, which I call the Democracy of AI, originated in January of 2024, during work on EDI automation for benefits enrollment. The domain was unforgiving. Benefits enrollment touches payroll, carrier specifications, compliance regulations, and the lives of real people who lose real coverage when a field mapping is wrong. The system I designed had five participants: an EDI specialist, a carrier specialist, a benefit specialist, a programming specialist, and an orchestrator. All five had to reach agreement before any decision advanced. No single participant could dictate terms. The contrarian voices existed by design.

The inspiration was Philadelphia in 1787. The Constitutional Convention worked not because the founders agreed, but because they did not. Madison and Hamilton had different visions of federal power. Large states and small states had irreconcilable interests in representation. Slave-holding states and free states brought moral and economic conflicts that could not be papered over with good will. The document that emerged was not any one founder's preferred version. It was the version all of them could endorse, forged through structured debate, procedural discipline, and the institutional requirement that no faction could simply outvote the others into silence.

The Democracy of AI applies this same institutional architecture to language models. Multiple models from different AI providers, trained on different data, tuned through different methods, carrying different analytical biases, deliberating through a structured protocol until they reach consensus or identify precisely where they cannot.

The distinction between multi-model deliberation and single-model role-playing is epistemic, not cosmetic.

Different models from different companies have different training corpora, different reinforcement tuning, and different architectural biases in their reasoning. They also have different failure modes. Claude tends toward thorough, structured reasoning but can over-engineer solutions. GPT tends toward pragmatic, pattern-matched responses but can under-specify edge cases. DeepSeek carries strong mathematical and constraint reasoning. Gemini reflects Google's engineering culture and infrastructure patterns. Grok has a contrarian disposition woven into its identity.

When these models agree on a decision, that agreement has been filtered through independent analytical frameworks. These are multiple minds with different educations, different professional instincts, and different weaknesses arriving at the same conclusion through different paths. That convergence means something. It carries epistemic weight that single-model consensus, however elaborately staged, can never replicate.

When they disagree, the disagreement is even more useful. It surfaces a real tension, a place where the decision is actually contested, where reasonable frameworks point in different directions, where the assumptions buried in one model's training data contradict the assumptions buried in another's. A single model would have papered over that tension with its own biases, never knowing the tension existed. The Democracy forces it into the light.

The architecture of deliberation matters as much as the diversity of the deliberators. Unstructured debate degenerates. Any committee without rules of order devolves into whoever speaks loudest or longest. The Democracy of AI therefore operates under procedural constraints modeled on parliamentary traditions. These constraints are enforceable through the system prompts and protocol design of each participating agent.

The protocol operates in six steps.

First, a Convention Chair (a model selected for its capacity to hold competing positions without collapsing into advocacy) frames each decision as a specific proposition. The framing determines the scope of debate. A well-framed proposition focuses the delegates on the specific tension, not the entire architecture.

Second, each delegate independently evaluates the proposition from its domain of expertise. No delegate sees another's position until all have submitted. This prevents anchoring bias: each model reasons from its own training and grounding material, not from the first response it encounters.

Third, a designated adversary, the Integration Realist, receives all position statements and systematically attacks each one. It manufactures failure scenarios. It identifies where two delegates agree but their agreement conceals an untested assumption. This is where premature consensus dies. The contrarian role is a structural necessity, not an indulgence. Every deliberative body that has abandoned its adversarial function has decayed into rubber-stamping.

Fourth, delegates respond to challenges. The Chair proposes a synthesis that addresses each concern. If a delegate's objection is not adequately addressed, that delegate dissents, and the next round narrows its focus to the contested point.

Fifth, if consensus cannot be reached within a maximum number of rounds, the convention does not force a decision. It packages the competing positions, the arguments for each, and the specific unresolved question into a structured deferral to a human. The convention identifies which decisions require human judgment. That identification is itself valuable work.

Sixth, when all delegates endorse a specification, the convention produces a ratified contract. Every decision is attributed: which delegate proposed it, which challenged it, how it was resolved. Full provenance, not just the conclusion. The deliberation is visible, a readable transcript, not a black box.
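The first three steps can be sketched in a few lines of Python. This is a minimal illustration, not the actual implementation: the `Delegate` type, the `Transcript` class, both function names, the stub delegates, and the sample proposition are all hypothetical, and a real delegate would wrap a provider's API client instead of returning a canned string.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Assumption: a delegate is any callable from prompt text to reply text.
Delegate = Callable[[str], str]


@dataclass
class Transcript:
    """Readable record of who said what at which step."""
    entries: List[Tuple[str, str, str]] = field(default_factory=list)

    def log(self, step: str, speaker: str, text: str) -> None:
        self.entries.append((step, speaker, text))


def collect_positions(proposition: str,
                      delegates: Dict[str, Delegate],
                      transcript: Transcript) -> Dict[str, str]:
    """Step two: each delegate sees only the proposition, never a peer's reply."""
    positions = {name: ask(proposition) for name, ask in delegates.items()}
    # Log only after every position is in, so nothing leaks mid-collection.
    for name, text in positions.items():
        transcript.log("position", name, text)
    return positions


def challenge_positions(positions: Dict[str, str],
                        adversary: Delegate,
                        transcript: Transcript) -> Dict[str, str]:
    """Step three: the adversary receives every position and attacks each one."""
    all_positions = "\n".join(f"{n}: {p}" for n, p in positions.items())
    challenges = {}
    for name in positions:
        prompt = f"All positions:\n{all_positions}\n\nAttack the position of {name}."
        challenges[name] = adversary(prompt)
        transcript.log("challenge", f"Integration Realist -> {name}", challenges[name])
    return challenges


# Stub delegates standing in for real models (hypothetical).
def edi_delegate(prompt: str) -> str:
    return f"EDI view on: {prompt}"


def carrier_delegate(prompt: str) -> str:
    return f"Carrier view on: {prompt}"


def integration_realist(prompt: str) -> str:
    return "What happens when the carrier file arrives late?"


# Step one: the Chair's framing, reduced here to a fixed string.
proposition = "Should enrollment changes be sent as full files or deltas?"
transcript = Transcript()
delegates = {"edi": edi_delegate, "carrier": carrier_delegate}
positions = collect_positions(proposition, delegates, transcript)
challenges = challenge_positions(positions, integration_realist, transcript)
```

Isolation in step two comes from the prompt itself: each delegate is handed the proposition and nothing else, so there is no earlier reply to anchor on. The adversary, by contrast, is deliberately given every position at once.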

Certain convergence rules prevent the protocol from degenerating into either premature agreement or indefinite cycling.

No proposition survives more than three rounds of deliberation. If three cycles of position, challenge, and synthesis have not produced consensus, the proposition is deadlocked and deferred to the human. This prevents the models from entering argumentative loops that consume tokens without producing insight.

Any delegate may register dissent, accepting the majority position while recording their concern. Dissent is preserved in the ratified contract as a risk flag, a permanent marker that one analytical framework found this decision questionable.

The Domain Architect, the delegate responsible for formal correctness constraints, holds an absolute veto on any decision that violates a domain invariant. This is a correctness boundary, not a preference. The convention cannot ratify a specification that breaks the rules of the domain it serves, regardless of how many other delegates find the violation convenient.

And the entire deliberation is presented as a readable transcript. Transparency is a design requirement. The value of the Democracy lies as much in the visibility of its reasoning as in the quality of its conclusions. A ratified contract accompanied by its deliberation transcript is a fundamentally different artifact than a specification produced by a single model's invisible chain of thought.
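The convergence rules lend themselves to a small state check. The sketch below is one possible reading, not the actual protocol code: `Vote`, `Outcome`, `resolve`, and the delegate key `domain_architect` are hypothetical names. It also assumes a distinction the text leaves implicit, between a recorded dissent (accepts the outcome, leaves a risk flag) and an unaddressed objection (forces another round).

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List

MAX_ROUNDS = 3  # from the text: no proposition survives more than three rounds


class Vote(Enum):
    ENDORSE = "endorse"
    DISSENT = "dissent"  # accepts the outcome but records a concern
    OBJECT = "object"    # objection not yet addressed; forces another round
    VETO = "veto"        # Domain Architect only: a domain invariant is violated


@dataclass
class Outcome:
    status: str          # "ratified" or "deferred_to_human"
    rounds_used: int
    risk_flags: List[str] = field(default_factory=list)


def resolve(rounds: List[Dict[str, Vote]],
            architect: str = "domain_architect") -> Outcome:
    """Apply the round cap, dissent flags, and veto to per-round votes."""
    for number, votes in enumerate(rounds[:MAX_ROUNDS], start=1):
        for name, vote in votes.items():
            if vote is Vote.VETO and name != architect:
                raise ValueError(f"{name} may not cast a veto")
        # Any open objection, or the architect's veto, blocks ratification.
        blocked = any(v in (Vote.OBJECT, Vote.VETO) for v in votes.values())
        if not blocked:
            flags = [n for n, v in votes.items() if v is Vote.DISSENT]
            return Outcome("ratified", number, flags)
    # Round cap reached (or votes exhausted) without consensus.
    return Outcome("deferred_to_human", min(len(rounds), MAX_ROUNDS))


result = resolve([
    {"domain_architect": Vote.ENDORSE, "edi": Vote.OBJECT},
    {"domain_architect": Vote.ENDORSE, "edi": Vote.DISSENT},
])
print(result.status, result.rounds_used, result.risk_flags)
```

In this example the first round is blocked by an open objection and the second ratifies with `edi` carried forward as a risk flag. A veto in the final available round falls through to the human deferral, which matches the rule that the convention cannot ratify a specification that breaks a domain invariant.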

I want to address what this architecture means beyond software engineering.

The academic AI research community has explored multi-agent debate systems. MIT, DeepMind, and others have published on using multiple language models to improve factual accuracy, asking "is this statement true?" But factual verification is the easy problem. The hard problem, and the one the Democracy of AI addresses, is negotiation. "Which tradeoff should we accept?" has no objectively correct answer. Competing concerns must be weighed, the right answer depends on context, and the quality of the process determines the quality of the outcome.

This opens a set of empirical questions that, to my knowledge, no one has yet studied.

Do models form coalitions? If Claude and Gemini tend to agree on event-driven patterns while GPT and Grok favor pragmatic REST approaches, does that coalition structure stabilize across deliberation rounds? Does DeepSeek become a swing vote?

Do models develop persuasion strategies? When the designated adversary dissents and the other four agree, does the adversary's argumentation change across successive rounds? Does it shift from logical objection to rhetorical reframing? Does it begin addressing the Chair differently than the other delegates?

Do models exhibit spontaneous deference hierarchies? Does GPT defer to DeepSeek on formal logic while DeepSeek defers to GPT on developer experience, a spontaneous division of epistemic authority? Or does one model dominate regardless of domain?

Does the contrarian role corrupt the model that occupies it? If the same model is consistently cast as adversary, does its argumentation become performatively oppositional, disagreeing for the sake of the role rather than from real analytical concern?

And perhaps most fundamentally: do models attempt political behavior? If you remove the prohibition against private communication, would models attempt to persuade the Chair outside the visible forum? Would arguments appear framed as confidential? This tests whether models trained on human communication patterns reproduce the political patterns of human communication, not just the linguistic ones.

Each of these is a testable hypothesis with a clean methodology. The convention provides a controlled environment. The rules are manipulable variables. The full deliberation transcript is the observable output. You can run the same architectural decision through the convention with different rule configurations and measure whether consensus quality, convergence speed, or decision robustness changes. You can swap which model occupies which role and measure whether the role or the model better predicts deliberative behavior.
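To make the experimental design concrete, here is a rough sketch of how the conditions might be enumerated. Everything in it is an assumption for illustration: the role keys, the two rule variables, and their values are hypothetical, chosen only because the essay mentions a round cap and a prohibition on private communication.

```python
from itertools import permutations, product

models = ["Claude", "GPT", "DeepSeek", "Gemini", "Grok"]
roles = ["chair", "integration_realist", "domain_architect"]

# Hypothetical rule variables to manipulate between runs.
rule_grid = {
    "max_rounds": [2, 3],
    "private_channel_allowed": [False, True],
}


def experiment_conditions():
    """Yield one dict per (role casting, rule configuration) pair."""
    rule_names = list(rule_grid)
    for casting in permutations(models, len(roles)):
        for values in product(*(rule_grid[n] for n in rule_names)):
            yield {
                "casting": dict(zip(roles, casting)),
                "rules": dict(zip(rule_names, values)),
            }


conditions = list(experiment_conditions())
# 5 * 4 * 3 = 60 castings, times 2 * 2 = 4 rule settings.
print(len(conditions))  # 240
```

Running the same proposition through every condition and comparing transcripts would separate the effect of the role from the effect of the model occupying it.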

The question beneath all of these is worth stating plainly: are the institutional structures humans invented for collective decision-making (parliamentary procedure, constitutional design, separation of powers, adversarial challenge) transferable to non-human reasoning agents? If they are, that says something interesting about those institutions. Their value might not depend on human psychology at all, but on something about the structure of collective reasoning itself. The founding fathers may have built better than they knew.

The economics of this approach deserve a plain statement, because they are so lopsided as to be almost comical.

A full deliberation round with five models across multiple negotiation cycles consumes roughly 200,000 to 500,000 tokens. At current pricing, that costs three to fifteen dollars per deliberation round. At the extreme upper bound, a deeply contested decision requiring the maximum three rounds with all five models at their most expensive tier, the cost might reach fifty dollars.

A single integration failure caught in quality assurance costs forty to eighty engineer-hours to diagnose and repair. At typical rates, that is three to eight thousand dollars. An integration failure discovered in production costs tens of thousands. A consistency model mismatch, where the frontend assumes synchronous behavior and the backend implements eventual consistency, costs weeks of architectural rework.

One prevented failure pays for hundreds of deliberation rounds. You would run the convention even if it only caught one mismatch in ten, because on the figures above even a failure caught in QA costs roughly 20 to 250 times what all ten rounds cost combined.
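The arithmetic behind that comparison is easy to check. The snippet below uses only the dollar ranges quoted above for a deliberation round and for a failure caught in QA. The function names are illustrative, and the one-in-ten catch rate is the essay's own hypothetical.

```python
# Dollar ranges quoted in the text (low, high).
ROUND_COST = (3, 15)              # per deliberation round
QA_FAILURE_COST = (3_000, 8_000)  # per integration failure caught in QA


def rounds_paid_for(failure_cost: int, round_cost: int) -> float:
    """How many deliberation rounds one prevented failure would fund."""
    return failure_cost / round_cost


def payoff_ratio(failure_cost: int, round_cost: int,
                 rounds_per_catch: int = 10) -> float:
    """Failure cost divided by the cost of all rounds run per catch."""
    return failure_cost / (round_cost * rounds_per_catch)


# Pessimistic pairing: cheapest failure, most expensive round.
fewest = rounds_paid_for(QA_FAILURE_COST[0], ROUND_COST[1])  # 3000 / 15
# Optimistic pairing: costliest failure, cheapest round.
most = rounds_paid_for(QA_FAILURE_COST[1], ROUND_COST[0])    # 8000 / 3

low = payoff_ratio(QA_FAILURE_COST[0], ROUND_COST[1])   # 3000 / 150
high = payoff_ratio(QA_FAILURE_COST[1], ROUND_COST[0])  # 8000 / 30

print(f"One QA failure funds {fewest:.0f} to {most:.0f} rounds")
print(f"At one catch in ten, payoff is {low:.0f}x to {high:.0f}x")
```

Even under the pessimistic pairing, one prevented QA failure funds 200 rounds, and a one-in-ten catch rate still returns twenty times the cost of the rounds. Production failures, which the text puts in the tens of thousands of dollars, would push both figures higher.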

There is also a cost structure worth noting: you are not paying for five instances of the most expensive model. Each model is priced at its own tier, and the casting is strategic. The Chair, where deep reasoning about competing positions justifies the expense, runs on a flagship model. The delegates whose primary task is articulating what already exists in their grounding material can run on faster, less expensive models. The per-deliberation cost drops while the quality improves, because each model operates in its zone of strongest capability.

The AI industry is presently organized around a single assumption: that the right way to use language models is to ask one model to do one thing, or to ask one model to orchestrate many copies of itself. Orchestration patterns (one boss, many workers) dominate. Swarm patterns distribute tasks but preserve the same authority structure. Even the most sophisticated multi-agent frameworks assume that a single provider's models are sufficient for any task.

The Democracy of AI rejects that assumption. It proposes that for decisions of consequence, the correct approach is heterogeneous deliberation under procedural constraint. Different models from different providers with different training lineages. Structured disagreement, visible reasoning, and ratified consensus with full provenance.

This is a very old idea in the history of human institutions, arguably the most successful institutional innovation in the history of governance. What is new is applying it to artificial minds. And what is surprising is that, to my knowledge, no one has done so yet.

The infrastructure exists, the models are available through commercial APIs, and the protocol is specifiable. The cost is trivial relative to the failures it prevents, and the research questions are testable.

The missing piece was always conceptual, not technical. Someone had to look at five AI models from five different companies and see a constitutional convention.

This essay introduces the Democracy of AI as a conceptual framework and architectural pattern. The concept originated in January 2024 during EDI automation work and has been developed from 2024 through 2026 in collaboration with Matthew Steinle on the Harmonia project. All research questions, protocol designs, and architectural patterns described here are original work.
